If you followed my earlier guide on how to install FreeBSD onto a pure ZFS system, or even just shamelessly copied some of the ideas[1], you may be wondering where, exactly, the benefits of using boot environments lie. This article explains all: how to use the superior snapshotting and cloning capabilities of ZFS to do system upgrades in a safe and reversible way, without eating up any more disk space than is really necessary.

While this article shows the work being done on a FreeBSD 9.0 system, the ideas here should be applicable to any FreeBSD version with sufficient ZFS capabilities to create the zroot/ROOT ZFSes and do the mountpoint juggling. I've personally used these ideas to upgrade a stable/8 system to stable/9 — one of the least exciting major version updates I've ever done. Which is a good thing.

However, it hasn't been tested on anything earlier than stable/8 as of January 2012, so adopt appropriate levels of caution when working elsewhere.

[1] Not that there's anything wrong with copying. Ideas exist to be copied, and doing so is certainly no cause for shame, so long as you give proper credit to the originators.

The filesystem layout and methodology used here is heavily based on the following articles:

The whole concept of boot environments comes from Oracle (née Sun):

To illustrate the process, I shall run through an update on a virtual machine which I have been managing using these techniques for some time. Initially the machine has two boot environments configured: the one currently in use and the previous one, from which the current one was created.

# ./manageBE list
Poolname: zroot
BE                Active Active Mountpoint              Space
Name              Now    Reboot -                       Used
----              ------ ------ ----------              -----
STABLE_9_20120317 yes    yes    /                       1.47G
STABLE_9_20120310 no     no     /ROOT/STABLE_9_20120310 615M

Used by BE snapshots: 1.20G

As you can see, my naming convention for boot environments is based on the SVN branch (stable/9) and the date when the upgrade process was started. The date marks when the latest available system sources in /usr/src were obtained rather than when the actual upgrade was performed. However, the naming convention is entirely arbitrary. Use whatever makes sense to you.
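The naming convention is easy to script. The following is a minimal sketch, assuming you want names of the form BRANCH_YYYYMMDD as used in this article; the helper name be_name is hypothetical and is not part of manageBE.

```shell
# Hypothetical helper: derive a boot environment name from an SVN branch
# and a date stamp, following the BRANCH_YYYYMMDD convention used here.
be_name() {
    branch=$1                       # e.g. stable/9
    stamp=${2:-$(date +%Y%m%d)}     # defaults to today's date
    # Upper-case the branch and turn the slash into an underscore.
    printf '%s_%s\n' "$(printf '%s' "$branch" | tr 'a-z/' 'A-Z_')" "$stamp"
}

be_name stable/9 20120321    # prints STABLE_9_20120321
```

Passing the date explicitly matters if, as here, the name should record when the sources were fetched rather than when the upgrade was performed.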

This system was originally installed according to my install on ZFS article. The partition layout looks like this:

# df -h
Filesystem                            Size  Used  Avail Capacity Mounted on
zroot/ROOT/STABLE_9_20120317          48G   1.5G  47G   3%       /
devfs                                 1.0k  1.0k  0B    100%     /dev
zroot/ROOT/STABLE_9_20120310          48G   1.6G  47G   3%       /ROOT/STABLE_9_20120310
zroot/ROOT/STABLE_9_20120310/usr/obj  49G   2.5G  47G   5%       /ROOT/STABLE_9_20120310/usr/obj
zroot/ROOT/STABLE_9_20120310/usr/src  48G   1.2G  47G   3%       /ROOT/STABLE_9_20120310/usr/src
zroot/home                            47G   54M   47G   0%       /home
zroot/tmp                             47G   53k   47G   0%       /tmp
zroot/usr/local                       47G   377M  47G   1%       /usr/local
zroot/ROOT/STABLE_9_20120317/usr/obj  49G   2.5G  47G   5%       /usr/obj
zroot/usr/ports                       47G   249M  47G   1%       /usr/ports
zroot/usr/ports/distfiles             47G   268M  47G   1%       /usr/ports/distfiles
zroot/usr/ports/packages              47G   92M   47G   0%       /usr/ports/packages
zroot/ROOT/STABLE_9_20120317/usr/src  48G   1.2G  47G   3%       /usr/src
zroot/var                             47G   2.3M  47G   0%       /var
zroot/var/crash                       47G   31k   47G   0%       /var/crash
zroot/var/db                          47G   194M  47G   0%       /var/db
zroot/var/db/pkg                      47G   2.1M  47G   0%       /var/db/pkg
zroot/var/empty                       47G   31k   47G   0%       /var/empty
zroot/var/log                         47G   567k  47G   0%       /var/log
zroot/var/mail                        47G   31k   47G   0%       /var/mail
zroot/var/run                         47G   57k   47G   0%       /var/run
zroot/var/tmp                         47G   31k   47G   0%       /var/tmp

This is the normal working state of the system between updating episodes. All of the old ZFSes from the previous boot environment (STABLE_9_20120310) are mounted beneath /ROOT, while the ZFSes from the current environment (STABLE_9_20120317) are mounted as the active /, /usr/src and /usr/obj directories. The previous boot environment should be considered read only, although I haven't gone as far as changing ZFS properties to enforce that. Quite apart from anything else, should I need to switch to the old boot environment in an emergency, having to flip the readonly property is an extra hassle I don't want in the heat of the moment.

As the essence of this strategy is not scribbling on known working systems, I need a clone of the existing system to work on. I could do this using the normal zfs snapshot -r zroot/ROOT/STABLE_9_20120317@STABLE_9_20120321 and then zfs clone zroot/ROOT/STABLE_9_20120317@STABLE_9_20120321 zroot/ROOT/STABLE_9_20120321 sequence, but that's far too much typing. Instead I shall use the manageBE script written by Philipp Wuensch.

# ./manageBE create -n STABLE_9_20120321 -s STABLE_9_20120317 -p zroot
./manageBE: cannot create /zroot/ROOT/STABLE_9_20120321/boot/loader.conf: No such file or directory
./manageBE: cannot create /zroot/ROOT/STABLE_9_20120321/boot/loader.conf: No such file or directory
./manageBE: cannot create /zroot/ROOT/STABLE_9_20120321/etc/fstab: No such file or directory
./manageBE: cannot create /zroot/ROOT/STABLE_9_20120321/etc/fstab: No such file or directory
./manageBE: cannot create /zroot/ROOT/STABLE_9_20120321/etc/fstab: No such file or directory
./manageBE: cannot create /zroot/ROOT/STABLE_9_20120321/etc/fstab: No such file or directory
./manageBE: cannot create /zroot/ROOT/STABLE_9_20120321/etc/fstab: No such file or directory
The new Boot-Environment is ready to be updated and/or activated.

Despite all the alarming error messages this has achieved the desired effect. The new filesystems are mounted under /ROOT.

# df -h
Filesystem                            Size  Used  Avail Capacity Mounted on
zroot/ROOT/STABLE_9_20120317          48G   1.5G  47G   3%       /
devfs                                 1.0k  1.0k  0B    100%     /dev
zroot/ROOT/STABLE_9_20120310          48G   1.6G  47G   3%       /ROOT/STABLE_9_20120310
zroot/ROOT/STABLE_9_20120310/usr/obj  49G   2.5G  47G   5%       /ROOT/STABLE_9_20120310/usr/obj
zroot/ROOT/STABLE_9_20120310/usr/src  48G   1.2G  47G   3%       /ROOT/STABLE_9_20120310/usr/src
zroot/home                            47G   54M   47G   0%       /home
[...]
zroot/var/tmp                         47G   31k   47G   0%       /var/tmp
zroot/ROOT/STABLE_9_20120321          48G   1.5G  47G   3%       /ROOT/STABLE_9_20120321
zroot/ROOT/STABLE_9_20120321/usr      47G   33k   47G   0%       /ROOT/STABLE_9_20120321/usr
zroot/ROOT/STABLE_9_20120321/usr/obj  49G   2.5G  47G   5%       /ROOT/STABLE_9_20120321/usr/obj
zroot/ROOT/STABLE_9_20120321/usr/src  48G   1.2G  47G   3%       /ROOT/STABLE_9_20120321/usr/src
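For comparison, here is roughly what the manual snapshot-and-clone sequence mentioned earlier involves. This is a sketch, not a description of what manageBE does internally: the function name is hypothetical, the list of child datasets is an assumption read off the df output above, and the function only prints the commands so nothing is changed. One recursive snapshot covers the whole tree, but zfs clone works on a single snapshot at a time, so each dataset needs its own clone, parents first.

```shell
# Print (not run) the zfs commands for cloning a boot environment by hand.
# The child dataset list is an assumption based on the df output above.
print_be_clone_cmds() {
    pool=$1
    src=$2
    new=$3
    echo "zfs snapshot -r ${pool}/ROOT/${src}@${new}"
    # zfs clone handles one snapshot at a time, so parents come first.
    for child in "" /usr /usr/obj /usr/src; do
        echo "zfs clone ${pool}/ROOT/${src}${child}@${new} ${pool}/ROOT/${new}${child}"
    done
}

print_be_clone_cmds zroot STABLE_9_20120317 STABLE_9_20120321
```

Reviewing the printed commands before pasting them into a root shell is a cheap safeguard, which is why the sketch prints rather than executes.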

There are a couple of nits that need cleaning up. Really I should fix up the manageBE script to deal with them, but I haven't got round to doing that yet.

loader.conf in the new environment needs to be modified to tell the kernel to mount the root from itself. This is absolutely vital.

# cd /ROOT/STABLE_9_20120321/boot

# ${EDITOR} loader.conf

Modify the vfs.root.mountfrom property to reference the new boot environment:

# diff -u loader.conf{~,}
--- loader.conf~ 2012-03-17 08:49:33.782414902 +0000
+++ loader.conf 2012-03-21 09:15:06.262039252 +0000
@@ -1,4 +1,4 @@
-vfs.root.mountfrom="zfs:zroot/ROOT/STABLE_9_20120317"
+vfs.root.mountfrom="zfs:zroot/ROOT/STABLE_9_20120321"
 zfs_load="YES"
 opensolaris_load="YES"

The other file mentioned in those error messages, fstab, doesn't need any changes.
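If you would rather not edit loader.conf by hand each time, the vfs.root.mountfrom change can be scripted. The following is a minimal sketch under the assumption that the file already contains a vfs.root.mountfrom line; the helper name set_root_mountfrom is hypothetical. It goes through a temporary file instead of sed -i, because the in-place flag behaves differently between BSD and GNU sed.

```shell
# Hypothetical helper: point vfs.root.mountfrom in a loader.conf at a dataset.
set_root_mountfrom() {
    conf=$1
    dataset=$2
    tmp=$(mktemp) || return 1
    # Rewrite only the vfs.root.mountfrom line; all other lines pass through.
    if sed "s|^vfs\.root\.mountfrom=.*|vfs.root.mountfrom=\"zfs:${dataset}\"|" "$conf" > "$tmp"; then
        cat "$tmp" > "$conf"    # overwrite in place, keeping owner and mode
        status=$?
    else
        status=1
    fi
    rm -f "$tmp"
    return "$status"
}
```

For the upgrade in this article it would be called as set_root_mountfrom /ROOT/STABLE_9_20120321/boot/loader.conf zroot/ROOT/STABLE_9_20120321. If the file has no vfs.root.mountfrom line, it is left unchanged, so check the result with grep afterwards.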

The ZFS snapshot-and-clone dance doesn't carry over the properties from the original ZFSes exactly. The one significant casualty is the canmount property. Initially we have canmount turned off here:

# zfs get canmount zroot/ROOT/STABLE_9_20120317/usr
NAME                              PROPERTY  VALUE  SOURCE
zroot/ROOT/STABLE_9_20120317/usr  canmount  off    local

After creating the new boot environment, the property of the equivalent ZFS ends up in the default (i.e. on) state.

# zfs get canmount zroot/ROOT/STABLE_9_20120321/usr
NAME                              PROPERTY  VALUE  SOURCE
zroot/ROOT/STABLE_9_20120321/usr  canmount  on     default

The fix is easy.

# zfs set canmount=off zroot/ROOT/STABLE_9_20120321/usr

Failure to deal with this would mean that large parts of /usr were inaccessible on reboot, which would be no fun at all.
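Since this step is easy to forget, a small check can help. The sketch below is an assumption-laden illustration rather than part of manageBE: the function name is hypothetical, and it expects tab-separated name and value pairs on standard input, which is what zfs get -r -H -o name,value canmount zroot/ROOT prints. It lists every .../usr container dataset whose canmount is not off and returns a non-zero status if it finds one.

```shell
# Read "name<TAB>value" pairs, as printed by
#   zfs get -r -H -o name,value canmount zroot/ROOT
# and report every .../usr container dataset whose canmount is not "off".
check_usr_canmount() {
    awk -F '\t' '
        $1 ~ /\/usr$/ && $2 != "off" { print $1; bad = 1 }
        END { exit (bad ? 1 : 0) }'
}

# Sample input standing in for real zfs output:
printf 'zroot/ROOT/STABLE_9_20120317/usr\toff\nzroot/ROOT/STABLE_9_20120321/usr\ton\n' |
    check_usr_canmount || echo "fix the datasets listed above"
```

On a live system the input would come from the zfs get command in the comment instead of printf.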

Note that I have created separate, per-boot environment ZFSes to be mounted as /usr/src and /usr/obj. This is entirely because I intend to update the system by compiling from source.

# freebsd-update -b /ROOT/9.0-RELEASE-p1 upgrade

Back to the original concept of compiling the system. While it is possible to work with the system sources located anywhere in the directory tree, it is much easier to shuffle the ZFSes so that the relevant bits of the new boot environment are mounted at /usr/src and /usr/obj. This is where the cunning use of canmount=off above pays off.

# zfs inherit mountpoint zroot/ROOT/STABLE_9_20120317/usr
# zfs set mountpoint=/usr zroot/ROOT/STABLE_9_20120321/usr

This results in the following layout:

# df -h
Filesystem                            Size  Used  Avail Capacity Mounted on
zroot/ROOT/STABLE_9_20120317          48G   1.5G  47G   3%       /
devfs                                 1.0k  1.0k  0B    100%     /dev
zroot/ROOT/STABLE_9_20120310          48G   1.6G  47G   3%       /ROOT/STABLE_9_20120310
zroot/ROOT/STABLE_9_20120310/usr/obj  49G   2.5G  47G   5%       /ROOT/STABLE_9_20120310/usr/obj
zroot/ROOT/STABLE_9_20120310/usr/src  48G   1.2G  47G   3%       /ROOT/STABLE_9_20120310/usr/src
zroot/home                            47G   54M   47G   0%       /home
[...]
zroot/var/tmp                         47G   31k   47G   0%       /var/tmp
zroot/ROOT/STABLE_9_20120321          48G   1.5G  47G   3%       /ROOT/STABLE_9_20120321
zroot/ROOT/STABLE_9_20120317/usr/obj  49G   2.5G  47G   5%       /ROOT/STABLE_9_20120317/usr/obj
zroot/ROOT/STABLE_9_20120317/usr/src  48G   1.2G  47G   3%       /ROOT/STABLE_9_20120317/usr/src
zroot/ROOT/STABLE_9_20120321/usr/obj  49G   2.5G  47G   5%       /usr/obj
zroot/ROOT/STABLE_9_20120321/usr/src  48G   1.2G  47G   3%       /usr/src

"Hold on" I hear the more astute amongst you cry, "Isn't zroot/ROOT/STABLE_9_20120317 mounted at the root? How on earth does it work to have zroot/ROOT/STABLE_9_20120317/usr inherit the mountpoint property from there?" Indeed, yes, you have put your finger on a particularly crucial point. It all depends on slightly magical behaviour and probably is not something you should mention in polite company. However, it works, which is the important thing. Pay attention now, as this is the basis of the whole scheme of managing boot environments.

The root partition is special, and that lets us get away with taking certain liberties with mountpoints. It works because the ZFS mounted as / is mounted at an early stage in the boot process according to the properties in the kernel environment, bypassing the normal ZFS mechanisms.

So this:

# kenv vfs.root.mountfrom
zfs:zroot/ROOT/STABLE_9_20120317

overrides this:

# zfs get mountpoint zroot/ROOT/STABLE_9_20120317
NAME                          PROPERTY    VALUE                    SOURCE
zroot/ROOT/STABLE_9_20120317  mountpoint  /ROOT/STABLE_9_20120317  inherited from zroot

However, the mountpoint property is still inherited in the usual way for child ZFSes in that hierarchy. So they get mounted somewhere under /ROOT unless you change the mountpoint setting locally. All of the ZFSes for the different boot environments should be set up so their natural mountpoints are in distinct directory trees under /ROOT. This keeps them tidily out of the way when not in use. The sole exceptions in this scheme are the ZFSes mounted on /usr/src and /usr/obj, which are only relevant when building the system from source.
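The inheritance rule itself is simple: a dataset that inherits its mountpoint gets the mountpoint of the ancestor that sets it, plus its own path relative to that ancestor. The sketch below only illustrates that rule; the function name is hypothetical, and it assumes zroot has its mountpoint set to /, which is what the "inherited from zroot" output above implies.

```shell
# Illustration of mountpoint inheritance: ancestor mountpoint plus the
# dataset's path relative to that ancestor. Purely string manipulation;
# it does not query ZFS.
inherited_mountpoint() {
    ancestor=$1       # dataset that sets the mountpoint, e.g. zroot
    ancestor_mp=$2    # its mountpoint, assumed to be / here
    dataset=$3        # a descendant that inherits
    rel=${dataset#"$ancestor"}          # e.g. /ROOT/STABLE_9_20120317/usr
    mp="${ancestor_mp%/}${rel}"
    printf '%s\n' "${mp:-/}"
}

inherited_mountpoint zroot / zroot/ROOT/STABLE_9_20120317/usr
# prints /ROOT/STABLE_9_20120317/usr
```

This is why the children of the running root still land under /ROOT: the kernel mounts the root dataset at / directly, but the children compute their mountpoints from the property, not from where the parent actually ended up.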

If this is the first upgrade to your system after installing it according to the earlier version of my install-on-zfs article, double check the mountpoint property on the root partition of your live boot environment. It will probably be set to /, rather than inheriting from zroot/ROOT. Having the mountpoint of an old boot environment set to / will result in your root partition being overlaid and much gratuitous weirdness.

The instructions have been fixed in the current version of the article. Unfortunately, if you have this problem, you can't fix it simply by changing the mountpoint property while that ZFS is mounted as your root filesystem. Instead, you should follow the rest of the instructions in this article until you get to the stage of rebooting into the updated system. Rather than letting the system boot to multiuser, hit s at the logo-menu screen and boot into single user mode. Change the mountpoint property on the former root ZFS to inherit and then type ^D to carry on booting normally. This is a one-time fix. Once done, in future you will be able to just reboot without any similar palaver.

Use whatever mechanism you prefer for obtaining up-to-date system sources. There's plenty of discussion of this in the Updating and upgrading chapter of the Handbook. I'm going to use svn.

# cd /usr/src
# svn up
Updating '.':
[...]
U sys/sys/systm.h
U sys
Updated to revision 233275.

Then go ahead and build the system as usual[2]:

# make buildworld buildkernel
--------------------------------------------------------------
>>> World build started on Wed Mar 21 09:40:53 GMT 2012
--------------------------------------------------------------
--------------------------------------------------------------
>>> Rebuilding the temporary build tree
--------------------------------------------------------------
rm -rf /usr/obj/usr/src/tmp
[...]
objcopy --strip-debug --add-gnu-debuglink=zlib.ko.symbols zlib.ko.debug zlib.ko
--------------------------------------------------------------
>>> Kernel build for WORM completed on Wed Mar 21 18:58:03 GMT 2012
--------------------------------------------------------------

[2] Yes, this did take an inordinately long time. It's running on a virtual machine with limited resources in VirtualBox on a MacBook. It's pretty slow.

Other than the unusual target location, installing the newly built system is done as normal. Installing to a directory tree rooted at an arbitrary point is well supported and works entirely smoothly. Simply set DESTDIR to the mountpoint of the new boot environment on the make command line. Other standard parts of the update process that use make work in exactly the same way.

# make installworld installkernel DESTDIR=/ROOT/STABLE_9_20120321
[...]
===> zlib (install)
install -o root -g wheel -m 555 zlib.ko /ROOT/STABLE_9_20120321/boot/kernel
install -o root -g wheel -m 555 zlib.ko.symbols /ROOT/STABLE_9_20120321/boot/kernel
kldxref /ROOT/STABLE_9_20120321/boot/kernel
# make check-old DESTDIR=/ROOT/STABLE_9_20120321
>>> Checking for old files
>>> Checking for old libraries
>>> Checking for old directories
To remove old files and directories run 'make delete-old'.
To remove old libraries run 'make delete-old-libs'.
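The one way to ruin the whole exercise at this point is to mistype or omit DESTDIR and install over the live system. As a small safeguard, the DESTDIR argument can be built by a function that refuses anything not under /ROOT. This is only a sketch; the name be_destdir is hypothetical and the /ROOT prefix is the convention used in this article.

```shell
# Hypothetical guard: emit a DESTDIR=... argument only for paths under /ROOT,
# so that a typo cannot point the install at the live root.
be_destdir() {
    be_root=$1
    case $be_root in
        /ROOT/?*) ;;    # looks like a boot environment mountpoint
        *) echo "refusing: $be_root is not under /ROOT" >&2
           return 1 ;;
    esac
    printf 'DESTDIR=%s\n' "$be_root"
}

be_destdir /ROOT/STABLE_9_20120321    # prints DESTDIR=/ROOT/STABLE_9_20120321
```

It could then be used as dest=$(be_destdir /ROOT/STABLE_9_20120321) && make installworld installkernel "$dest", so that a refused path stops the install before make is ever started.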

Similarly, merging in changes to init scripts and other configuration files, mostly in /ROOT/STABLE_9_20120321/etc, is pretty routine. It is not completely smooth, as mergemaster expects both to use resources from and to write files to locations under /ROOT/STABLE_9_20120321/var. The installworld step above will actually create that directory, but /var should be on a separate ZFS not managed as part of any boot environment.

# mergemaster -Ui -D /ROOT/STABLE_9_20120321
*** Unable to find mtree database (/ROOT/STABLE_9_20120321/var/db/mergemaster.mtree).
Skipping auto-upgrade on this run.
It will be created for the next run when this one is complete.
*** Press the [Enter] or [Return] key to continue
[...]

This is usually tolerable: not having an mtree database doesn't make a huge amount of difference unless you have extensive customization of your configuration, or you are upgrading over a large delta in version numbers. In which case, you could simply copy the mtree database before running mergemaster.
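Copying the database amounts to putting the live system's mergemaster.mtree where mergemaster will look for it under the new boot environment. Here is a minimal sketch; the function name is hypothetical, and the file location is taken from the path in the mergemaster message above. The first argument is the root of the running system, normally an empty string.

```shell
# Hypothetical helper: seed a boot environment with the live mtree database
# so that mergemaster can auto-upgrade files that were never customised.
seed_mtree_db() {
    live_root=$1    # normally "" (the running system)
    be_root=$2      # e.g. /ROOT/STABLE_9_20120321
    src="${live_root}/var/db/mergemaster.mtree"
    [ -f "$src" ] || { echo "no mtree database at $src" >&2; return 1; }
    mkdir -p "${be_root}/var/db" && cp -p "$src" "${be_root}/var/db/"
}
```

Running seed_mtree_db "" /ROOT/STABLE_9_20120321 before mergemaster would do the job. The copy lives in the boot environment's own var directory, so it is only a temporary aid for this one mergemaster run.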

You'll get some more complaints at the end of the mergemaster run.

*** You chose the automatic install option for files that did not
exist on your system. The following were installed for you:
/ROOT/STABLE_9_20120321/var/crash/minfree
/ROOT/STABLE_9_20120321/var/named/etc/namedb/master/empty.db
/ROOT/STABLE_9_20120321/var/named/etc/namedb/master/localhost-forward.db
/ROOT/STABLE_9_20120321/var/named/etc/namedb/master/localhost-reverse.db
/ROOT/STABLE_9_20120321/var/named/etc/namedb/named.conf
/ROOT/STABLE_9_20120321/var/named/etc/namedb/named.root

*** There is no /ROOT/STABLE_9_20120321/var/db/zoneinfo file to update /ROOT/STABLE_9_20120321/etc/localtime.
You should run tzsetup
Would you like to run it now? y or n [n]
*** Cancelled
Make sure to run tzsetup -C /ROOT/STABLE_9_20120321 yourself

The files that mergemaster installs into /ROOT/STABLE_9_20120321/var and the fact that there is no /ROOT/STABLE_9_20120321/var/db/zoneinfo file should not cause any real difficulties. In fact, as /ROOT/STABLE_9_20120321/var would be overlaid by zroot/var and children, and hence inaccessible once we've booted into the new environment, there is no point in using up the space to store them. There will be equivalent files in zroot/var that the live system uses. Similarly for /ROOT/STABLE_9_20120321/dev which will be overlaid by a devfs instance.

# cd /ROOT/STABLE_9_20120321/
# rm -rf dev
# rm -rf var
rm: var/empty: Operation not permitted
rm: var: Directory not empty
# chflags -R 0 var
# rm -rf var

We could simply rerun mergemaster after reboot to catch any edge cases involving files under /var.

The contents of the new boot environment are all ready to use. It only remains to activate the new boot environment and reboot into it.

After all the work that has been done, the list of boot environments looks like this:

# ./manageBE list
Poolname: zroot
BE                Active Active Mountpoint              Space
Name              Now    Reboot -                       Used
----              ------ ------ ----------              -----
STABLE_9_20120321 no     no     /ROOT/STABLE_9_20120321 145M
STABLE_9_20120317 yes    yes    /                       1.59G
STABLE_9_20120310 no     no     /ROOT/STABLE_9_20120310 615M

Used by BE snapshots: 1.99G

We need to activate the new boot environment:

# ./manageBE activate -n STABLE_9_20120321 -p zroot

This is not simply a matter of marking the new boot environment to be used for the root partition on the next reboot. The manageBE script also inverts the relation between the zroot/ROOT/STABLE_9_20120321 and zroot/ROOT/STABLE_9_20120317. Originally zroot/ROOT/STABLE_9_20120321 was a clone of a snapshot of zroot/ROOT/STABLE_9_20120317. Because of the copy-on-write block structure of ZFS, it is easy to rearrange things so that zroot/ROOT/STABLE_9_20120317 is effectively a clone of a snapshot of zroot/ROOT/STABLE_9_20120321. Think of it as primarily moving all the unchanged filesystem blocks common to both boot environments from the ownership of the earlier boot environment to the new one.
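In plain ZFS terms, activation comes down to zfs promote on the datasets of the new boot environment plus setting the pool's bootfs property. The sketch below prints those commands without running them. It is not lifted from manageBE: the function name is hypothetical, and the list of child datasets is an assumption based on the df output in this article.

```shell
# Print (not run) the commands behind activating a boot environment.
# The dataset list is an assumption taken from the df output in this article.
print_be_activate_cmds() {
    pool=$1
    be=$2
    for child in "" /usr /usr/obj /usr/src; do
        # zfs promote swaps the clone/origin relationship for one dataset.
        echo "zfs promote ${pool}/ROOT/${be}${child}"
    done
    # bootfs tells the boot code which dataset to load the kernel from.
    echo "zpool set bootfs=${pool}/ROOT/${be} ${pool}"
}

print_be_activate_cmds zroot STABLE_9_20120321
```

Promotion is what moves the shared blocks to the new environment, so that the older one can later be destroyed without taking the new one with it.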

This rearrangement produces a result like this:

# ./manageBE list
Poolname: zroot
BE                Active Active Mountpoint              Space
Name              Now    Reboot -                       Used
----              ------ ------ ----------              -----
STABLE_9_20120321 no     yes    /ROOT/STABLE_9_20120321 1.47G
STABLE_9_20120317 yes    no     /                       602M
STABLE_9_20120310 no     no     /ROOT/STABLE_9_20120310 615M

Used by BE snapshots: 1.79G

As you can see, the new boot environment is all set to activate on the next reboot. So let's do that:

# shutdown -r now

Unless you've been hit by the problem with mountpoint properties described above, or are operating in hyper-cautious mode, there is no need to worry about going into a single user shell. The usually recommended cautious upgrade approach of ensuring that the new kernel boots correctly before upgrading the world is less important. We have a complete world+kernel boot environment available to fall back on if it all goes horribly wrong. See below where I discuss what to do in that case.

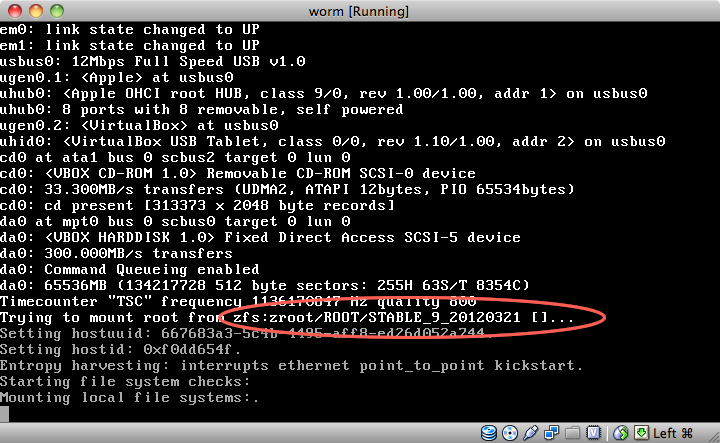

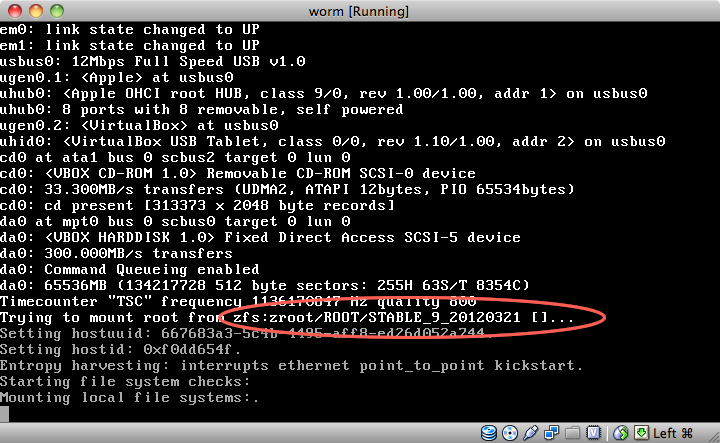

As the system comes back up, watch the output from the kernel. You want to see that the root is being mounted from the new boot environment.

Here's the expected result: one updated system running from the new boot environment. For minor upgrades, that's all you need to do. For a major version upgrade, this is where you'd begin to rebuild all the ports.

# df -h
Filesystem                            Size  Used  Avail Capacity Mounted on
zroot/ROOT/STABLE_9_20120321          44G   1.5G  42G   3%       /
devfs                                 1.0k  1.0k  0B    100%     /dev
zroot/ROOT/STABLE_9_20120310          44G   1.6G  42G   4%       /ROOT/STABLE_9_20120310
zroot/ROOT/STABLE_9_20120310/usr/obj  45G   2.5G  42G   5%       /ROOT/STABLE_9_20120310/usr/obj
zroot/ROOT/STABLE_9_20120310/usr/src  44G   1.2G  42G   3%       /ROOT/STABLE_9_20120310/usr/src
zroot/ROOT/STABLE_9_20120317          44G   1.6G  42G   4%       /ROOT/STABLE_9_20120317
zroot/ROOT/STABLE_9_20120317/usr/obj  45G   2.5G  42G   5%       /ROOT/STABLE_9_20120317/usr/obj
zroot/ROOT/STABLE_9_20120317/usr/src  44G   1.2G  42G   3%       /ROOT/STABLE_9_20120317/usr/src
zroot/home                            43G   54M   42G   0%       /home
zroot/tmp                             42G   53k   42G   0%       /tmp
zroot/usr/local                       43G   376M  42G   1%       /usr/local
zroot/ROOT/STABLE_9_20120321/usr/obj  45G   2.5G  42G   5%       /usr/obj
zroot/usr/ports                       43G   249M  42G   1%       /usr/ports
zroot/usr/ports/distfiles             43G   268M  42G   1%       /usr/ports/distfiles
zroot/usr/ports/packages              43G   92M   42G   0%       /usr/ports/packages
zroot/ROOT/STABLE_9_20120321/usr/src  44G   1.3G  42G   3%       /usr/src
zroot/var                             42G   2.4M  42G   0%       /var
zroot/var/crash                       42G   31k   42G   0%       /var/crash
zroot/var/db                          43G   194M  42G   0%       /var/db
zroot/var/db/pkg                      42G   2.1M  42G   0%       /var/db/pkg
zroot/var/empty                       42G   31k   42G   0%       /var/empty
zroot/var/log                         42G   556k  42G   0%       /var/log
zroot/var/mail                        42G   31k   42G   0%       /var/mail
zroot/var/run                         42G   54k   42G   0%       /var/run
zroot/var/tmp                         42G   32k   42G   0%       /var/tmp
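If you would rather have a yes or no answer than read through df output, the kernel environment can be compared against the boot environment you expected. A minimal sketch, with a hypothetical function name; it takes the value printed by kenv vfs.root.mountfrom as its first argument, so the comparison itself does not depend on being run on FreeBSD.

```shell
# Compare the value reported by "kenv vfs.root.mountfrom" with the boot
# environment we expect to be running from.
booted_from_be() {
    mountfrom=$1    # e.g. zfs:zroot/ROOT/STABLE_9_20120321
    pool=$2
    be=$3
    [ "$mountfrom" = "zfs:${pool}/ROOT/${be}" ]
}

# Sample value standing in for real kenv output:
booted_from_be zfs:zroot/ROOT/STABLE_9_20120321 zroot STABLE_9_20120321 &&
    echo "running from the new boot environment"
```

On the upgraded machine the first argument would be "$(kenv vfs.root.mountfrom)".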

Using ZFS to manage boot environments like this is pretty economical with disk space. The way snapshotting and cloning works in ZFS means that you only use extra space for the files that have changed between the two environments. Even so, accumulating too many old boot environments is going to make the place look cluttered.

After our upgrading efforts, the list of boot environments looks like this:

# ./manageBE list
Poolname: zroot
BE                Active Active Mountpoint              Space
Name              Now    Reboot -                       Used
----              ------ ------ ----------              -----
STABLE_9_20120321 yes    yes    /                       1.47G
STABLE_9_20120317 no     no     /ROOT/STABLE_9_20120317 602M
STABLE_9_20120310 no     no     /ROOT/STABLE_9_20120310 615M

Used by BE snapshots: 1.19G

I generally only keep the last two boot environments on this test system, so let's get rid of STABLE_9_20120310. The manageBE script does all the heavy lifting. One note: the meaning of the -o flag is a little obscure. I had to read the code of the script to work out that setting it to no means "destroy only the cloned ZFSes for that boot environment." yes means "destroy the snapshots they are based on too," which is appropriate here, where we did all the snapping and cloning specifically for the purpose of upgrading. It is possible to use manageBE to take over a snapshot of your root ZFS that you created for some other purpose and turn that into a new boot environment, in which case you would probably want to keep the snapshot.

# ./manageBE delete -n STABLE_9_20120310 -p zroot -o yes
# ./manageBE list
Poolname: zroot
BE                Active Active Mountpoint              Space
Name              Now    Reboot -                       Used
----              ------ ------ ----------              -----
STABLE_9_20120321 yes    yes    /                       1.47G
STABLE_9_20120317 no     no     /ROOT/STABLE_9_20120317 604M

Used by BE snapshots: 604M
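With date-stamped names, working out which boot environments are due for deletion is a matter of sorting. The sketch below reads names on standard input and prints the ones that fall outside the newest N. It is only an illustration with a hypothetical function name, and it assumes all names share the same branch prefix so that a plain sort orders them by date. It prints candidates and deletes nothing.

```shell
# Read boot environment names on stdin and print those that would be
# pruned when keeping only the newest $1. Relies on the BRANCH_YYYYMMDD
# convention, so that a plain sort puts the oldest first.
be_prune_candidates() {
    keep=$1
    sort | awk -v keep="$keep" '
        { names[NR] = $0 }
        END { for (i = 1; i <= NR - keep; i++) print names[i] }'
}

printf '%s\n' STABLE_9_20120321 STABLE_9_20120310 STABLE_9_20120317 |
    be_prune_candidates 2    # prints STABLE_9_20120310
```

Each name printed could then be handed to ./manageBE delete as shown above, after checking that it is not the active environment.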

So far I've assumed everything went pretty much to plan. For routine minor version upgrades it almost certainly will. If you're changing your kernel configuration or doing a major upgrade, well, things can and do go wrong. There are also various places mentioned in the text where a missed step can have catastrophic results. You will need console access to fix the worst of these problems. As good as ZFS is, it cannot entirely do away with that necessity. While it can make a routine upgrade a console-login-free affair, it would be foolish to proceed without that access being available.

Generally, a mistake in the procedure above would have pretty obvious and immediate consequences, which you could rectify as you went along. If necessary it is simple enough to just wipe out the new boot environment and start again from square one.

One slightly tricky problem mentioned above would be forgetting to update loader.conf to cause the kernel to use the new boot environment for its root filesystem. You'd end up with a new kernel but an old world: a combination that usually, but not always, works well enough that you could simply log in, edit /ROOT/STABLE_9_20120321/boot/loader.conf and then just reboot again. If kernel and world really don't work together, then you still have the option of backing out to the previous boot environment and applying the same fix from there. Vide infra.

Forgetting to set canmount=off on zroot/ROOT/STABLE_9_20120321/usr has an interesting effect. It renders almost everything under /usr inaccessible, except for /usr/local, /usr/ports, /usr/src and the other separate ZFSes. Fortunately the zfs command and all relevant shared libraries are in /sbin or /lib, so you can easily run

# zfs set canmount=off zroot/ROOT/STABLE_9_20120321/usr

Even if you did somehow get to the state of trying to reboot without canmount=off set on that ZFS, the problem is easily fixed by running the above command in single user mode. Console access is necessary to work in single-user mode, of course.

If the kernel fails to boot up at all, then you need to switch back to the previous boot environment. Unfortunately, this is a Catch-22 situation. The essential part of switching between boot environments is setting the bootfs property on the zpool[3].

# zpool set bootfs=zroot/ROOT/STABLE_9_20120317 zroot

Of course, doing this requires that you have the system booted up to at least single user mode. To fix the boot process, you need to have successfully booted the system. Catch-22. Besides, if the system will boot to single user, then you can probably fix the problems and don't need to back out to the old boot environment in any case.

There are two ways of dealing with this:

The second option is what I prefer: using ZFS with boot environments makes it practicable, since it minimizes the effort needed to switch back to an older, known good system. Even better would be the capability to override the bootfs property from the loader, but that's too big an ask I think.

[3] manageBE does basically this when activating a boot environment, but it also promotes the ZFSes for the selected boot environment, which is not essential for being able to boot the system.